LILI Lab

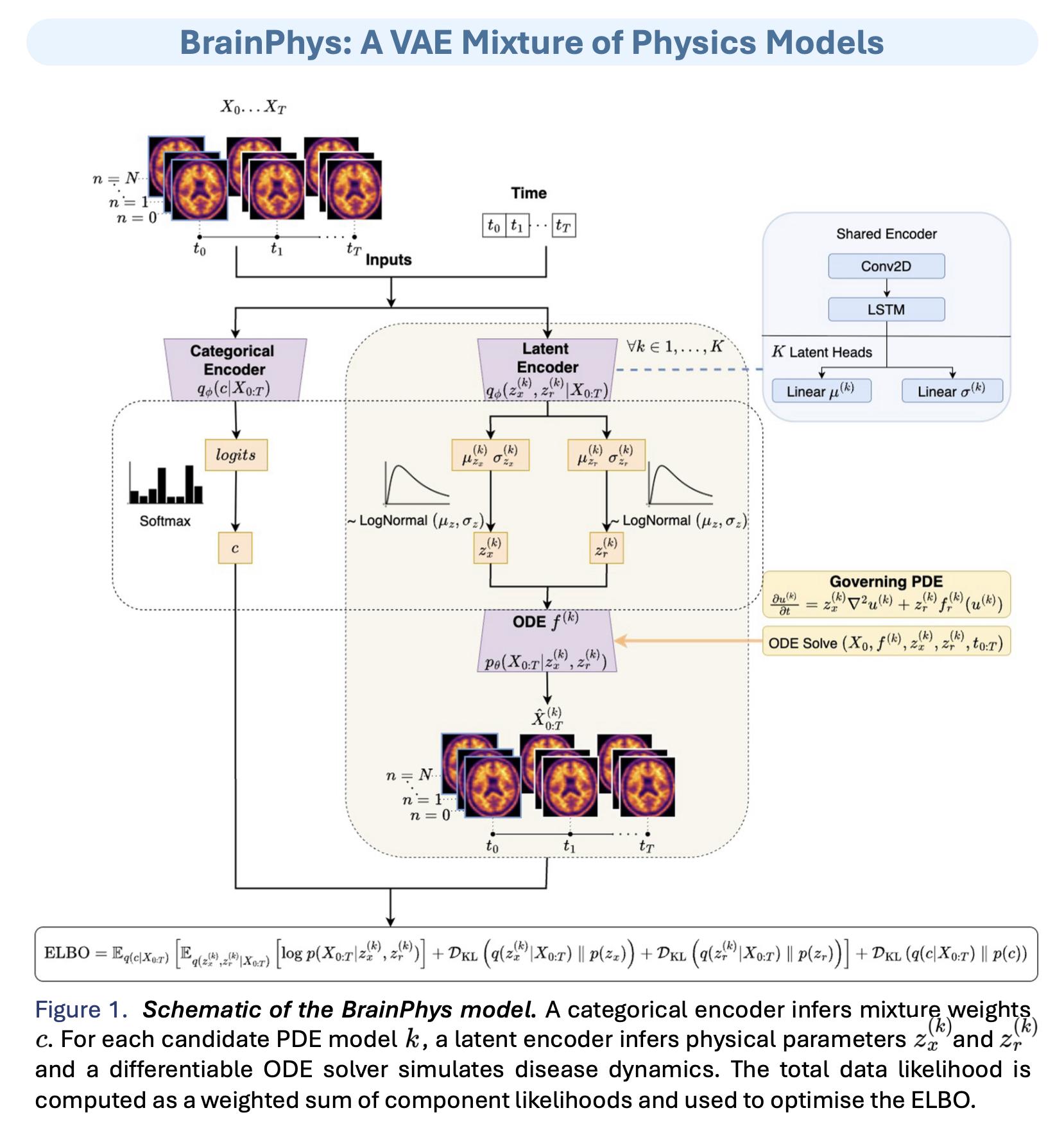

We are the Learners of Interpretable Latent Information (LILI) Lab, based in the Department of Informatics, University of Sussex. We create interpretable ML models for "inverse problems", where the information of interest is not directly measurable.

We aim to build models that are:

- Useful, providing meaningful insights to our interdisciplinary collaborators.

- Aware of uncertainty, and able to communicate it.

- Trustworthy, contributing tools for healthcare applications.

What We Do

Opportunities

Ways to join or collaborate with us:

- Working in our lab: expectations, skills, and resources for joining.

- Collaborating with us: how we scope projects with partners and keep work reproducible.

Our Research Themes

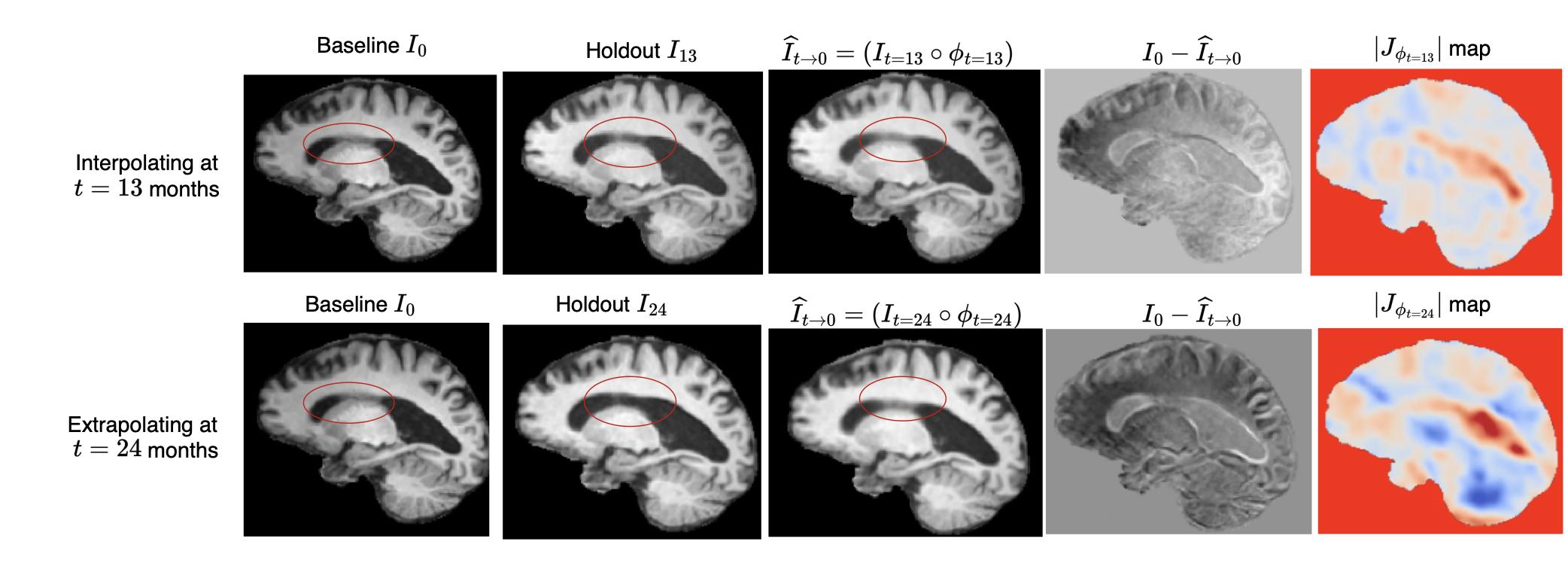

Medical Image Analysis

Work on MRI reconstruction, image registration, and related inverse problems.

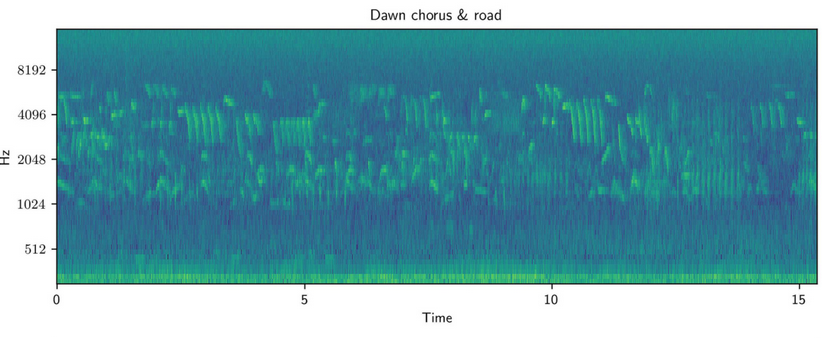

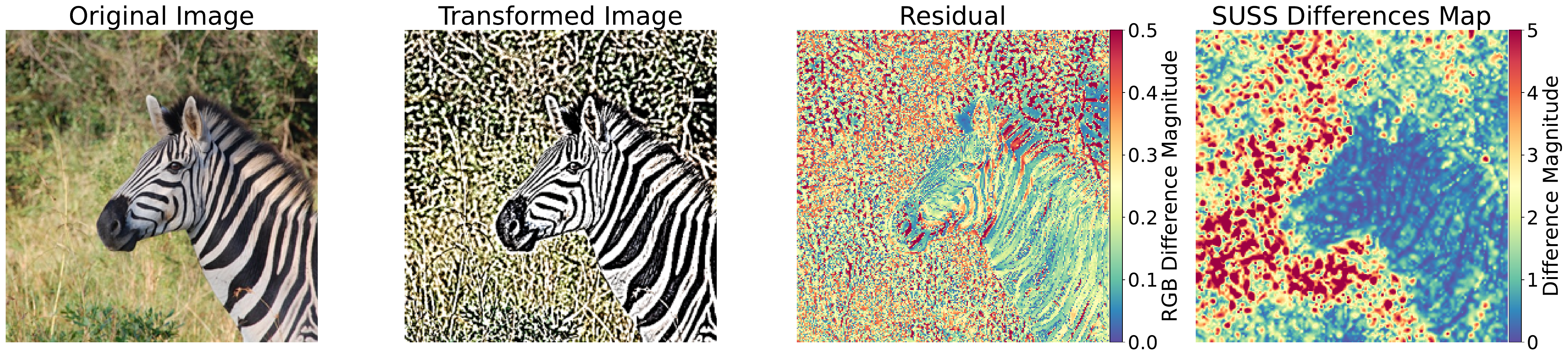

Computer Vision

Projects spanning visual recognition, reconstruction, and interpretable models for vision tasks.