Research Themes

The LILI Lab develops interpretable machine learning and statistical methods for inverse problems, where the quantities of interest cannot be measured directly and must be inferred from indirect observations. Our work focuses on spatiotemporal data in medical imaging, disease progression modelling, computer vision, and ecological monitoring.

Explore our four main research themes:

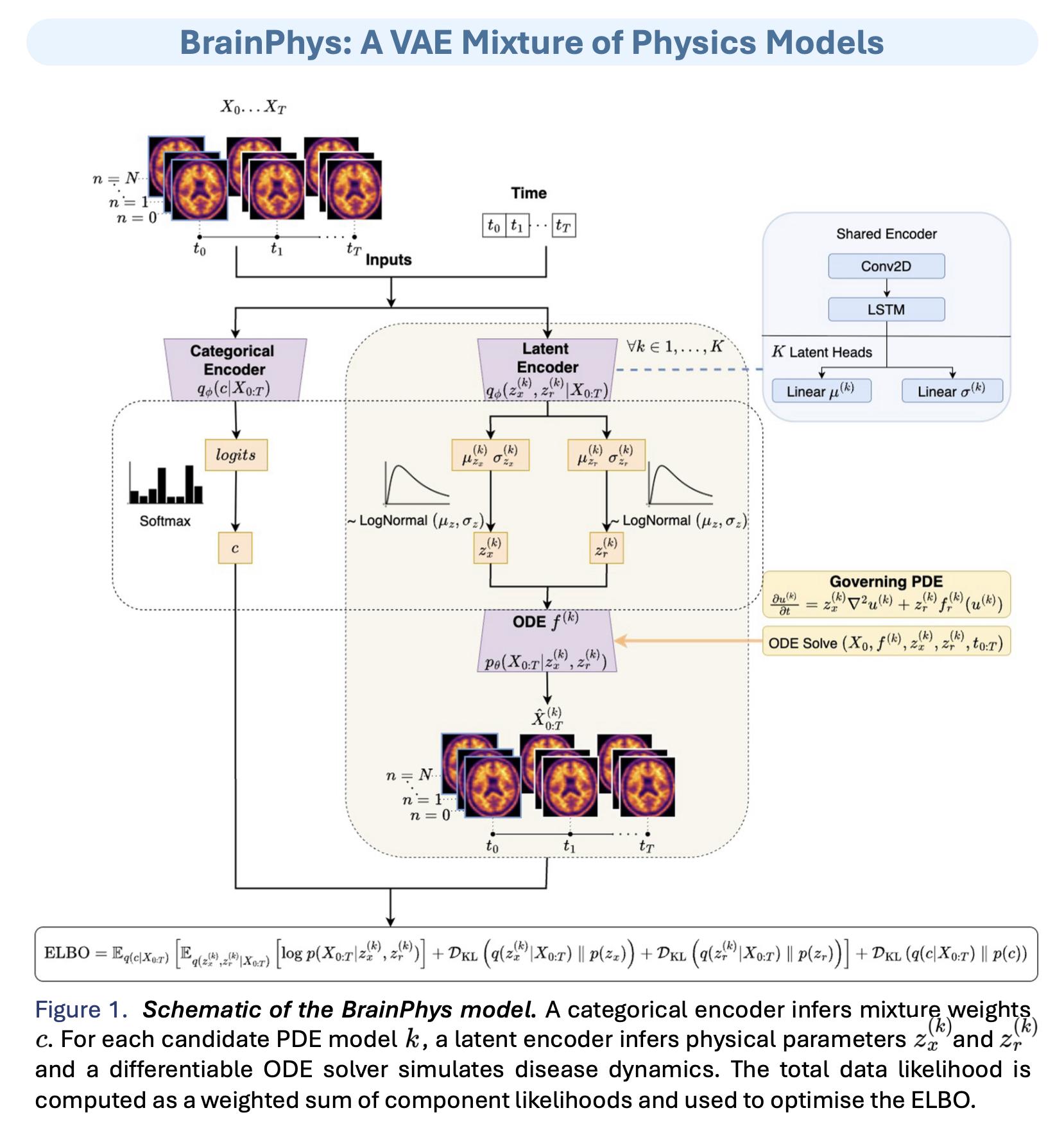

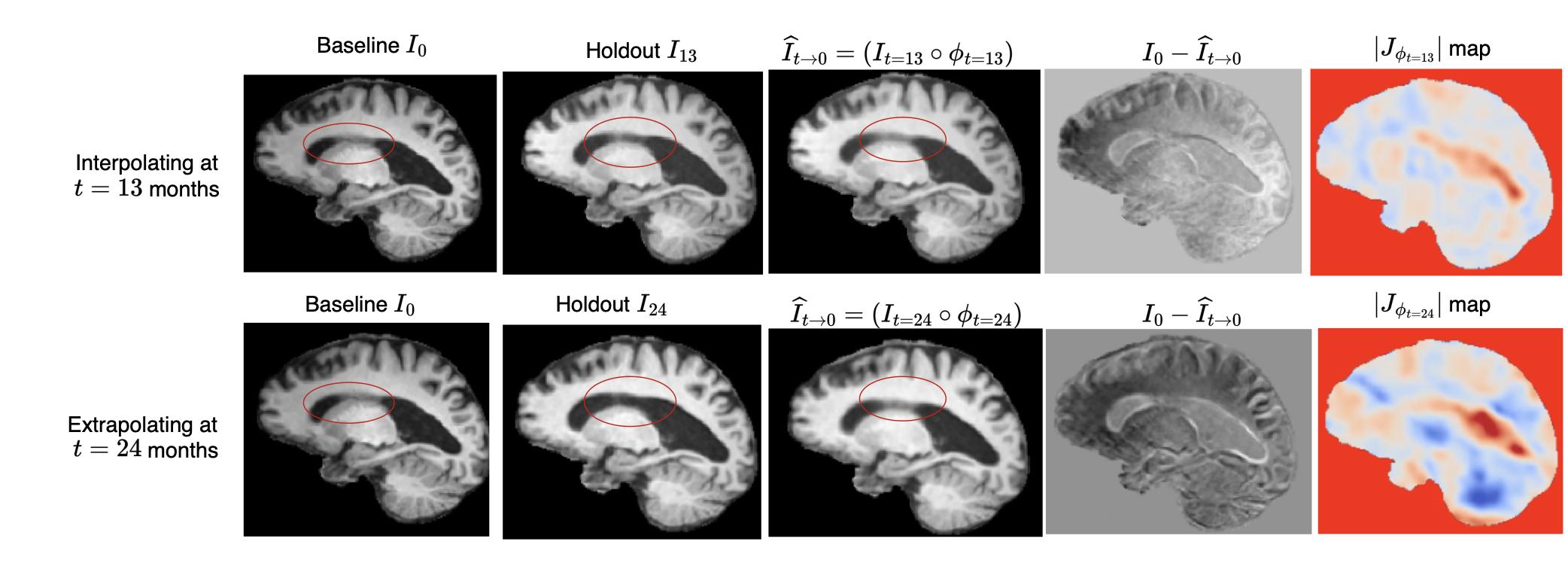

Medical Image Analysis

Work on MRI reconstruction, image registration, and related inverse problems.

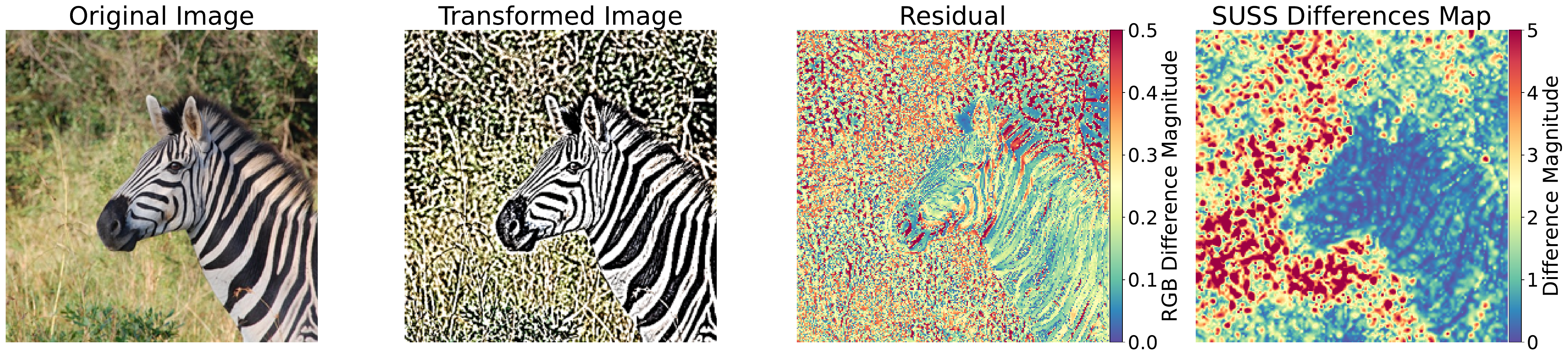

Computer Vision

Projects spanning visual recognition, reconstruction, and interpretable models for vision tasks.